After Q1 2026: SK Hynix's record quarter, Samsung's HBM4 re-entry, and the Rubin slip that resets 2026

SK Hynix booked ₩52.6T in Q1 2026 at a 72% operating margin. Samsung's first HBM4 shipments to NVIDIA's Vera Rubin landed in February. TrendForce now models Rubin at 22% of NVIDIA's 2026 mix, down from 29%. The Korean duopoly is intact, but the swing variable for the rest of 2026 is no longer share: it is qualified yield into a delayed flagship.

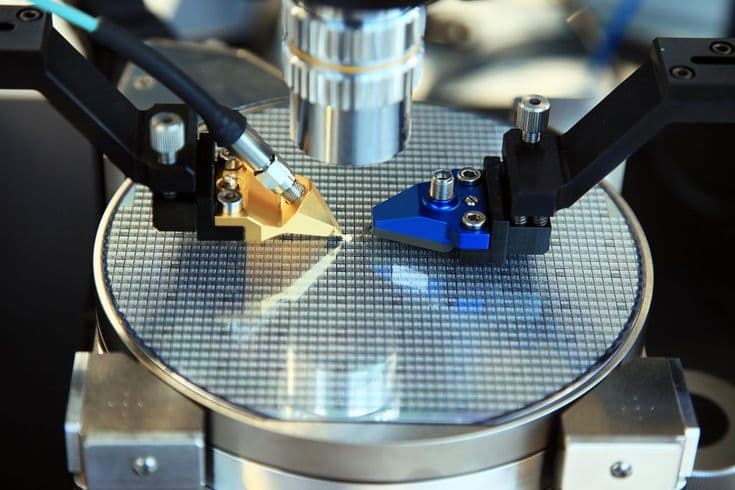

When NVIDIA's H200 began shipping in volume in 2024, the hidden story was not the GPU die. It was the stack of HBM3e memory bonded beside it — and the fact that the overwhelming majority of that memory, by value, was produced by two companies headquartered within forty kilometres of each other in the Korean province of Gyeonggi. Two years later the dynamic is less absolute but more interesting. SK Hynix has extended a roughly two-year process lead. Samsung, after a bruising 2024, has re-entered NVIDIA's supply chain — first with qualified HBM3e in 2025, then with HBM4 mass shipments to Vera Rubin in February 2026. Micron has quietly closed the gap on Samsung at HBM3e and is now competing at HBM4 alongside both Korean producers.

Q1 2026 earnings, released across late April and the first week of May, confirmed the picture. SK Hynix booked record revenue of ₩52.58 trillion (up 198% year-on-year, 60% quarter-on-quarter), an operating profit of ₩37.61 trillion at a 72% margin, and a net profit of ₩40.35 trillion. Samsung Electronics reported revenue of ₩133.9 trillion and operating profit of ₩57.23 trillion (up 756% year-on-year), with memory revenue of ₩74.8 trillion. The Counterpoint Q3 2025 split — SK Hynix 57%, Samsung 22%, Micron 21% — remains the latest publicly attributable read on share, and SK Hynix's own Q1 2026 disclosure indicated continued share around 57% on the back of HBM4 ramp leadership.
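The headline ratios can be checked directly from the reported figures. The sketch below is illustrative arithmetic only. It recomputes operating margins from the numbers quoted above and backs out implied prior-period revenue from the rounded growth rates, so the implied bases are approximations rather than reported values. The variable and function names are ours.

```python
# All values in trillions of won, as quoted in the paragraph above.
SK_HYNIX_REVENUE = 52.58
SK_HYNIX_OPERATING_PROFIT = 37.61
SK_HYNIX_YOY_GROWTH = 1.98  # "up 198% year-on-year" (rounded)
SK_HYNIX_QOQ_GROWTH = 0.60  # "60% quarter-on-quarter" (rounded)

SAMSUNG_REVENUE = 133.9
SAMSUNG_OPERATING_PROFIT = 57.23
SAMSUNG_MEMORY_REVENUE = 74.8


def margin(profit: float, revenue: float) -> float:
    """Return profit as a fraction of revenue."""
    return profit / revenue


def implied_base(current: float, growth: float) -> float:
    """Back out the prior-period value implied by a growth rate."""
    return current / (1.0 + growth)


sk_margin = margin(SK_HYNIX_OPERATING_PROFIT, SK_HYNIX_REVENUE)
samsung_margin = margin(SAMSUNG_OPERATING_PROFIT, SAMSUNG_REVENUE)
samsung_memory_share = SAMSUNG_MEMORY_REVENUE / SAMSUNG_REVENUE

# Approximate, because the growth rates are rounded to whole percentages.
sk_revenue_q1_2025 = implied_base(SK_HYNIX_REVENUE, SK_HYNIX_YOY_GROWTH)
sk_revenue_q4_2025 = implied_base(SK_HYNIX_REVENUE, SK_HYNIX_QOQ_GROWTH)

print(f"SK Hynix operating margin:       {sk_margin:.1%}")
print(f"Samsung operating margin:        {samsung_margin:.1%}")
print(f"Memory share of Samsung revenue: {samsung_memory_share:.1%}")
print(f"Implied SK Hynix Q1 2025 revenue: KRW {sk_revenue_q1_2025:.1f}T")
print(f"Implied SK Hynix Q4 2025 revenue: KRW {sk_revenue_q4_2025:.1f}T")
```

On these inputs SK Hynix's operating margin works out to about 71.5%, which rounds to the 72% quoted, against roughly 42.7% for Samsung Electronics as a whole.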

SK Hynix Q1 2026 results: ₩52.6T / 72% (record revenue · 72% operating margin · HBM ~12% of DRAM revenue)

How the lead opened — and why Samsung is back

Three observations from Nathan's 2021 sovereign-wealth brief still hold. SK Hynix's front-loaded TSV and MR-MUF packaging capex compounded into a 12-high HBM3e yield lead the firm still enjoys. Korea's domestic materials ecosystem — LX Semicon for silicon interposer work, Soulbrain for high-purity etching chemistry, Hana Materials for target materials — locked multi-year allocations that starved Micron of a comparable local supply chain. And Samsung's 1a-node DRAM yield curve stumbled through 2024, leaving its HBM3e unqualified with NVIDIA into early 2025.

What changed in early 2026 is the third leg. Reuters and TrendForce confirmed in February that Samsung's HBM4 mass shipments to NVIDIA's Vera Rubin program had begun, with reported pricing in the $500–$560 per unit range and gross margins north of 80%. High-volume qualification is expected through Q2. Samsung's own Q1 2026 commentary guided HBM revenue to more than triple over the year — an ambition that depends entirely on holding Rubin allocations through the ramp.
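Read together, the reported price range and margin floor put a ceiling on per-unit cost. The sketch below assumes the "north of 80%" gross margin applies to the same HBM4 units as the quoted pricing. That is our reading; the reports do not break out cost. The names are ours, for illustration.

```python
# Reported HBM4 pricing per unit, in US dollars.
PRICE_LOW_USD = 500.0
PRICE_HIGH_USD = 560.0
# "North of 80%" treated as a lower bound on gross margin (assumption).
GROSS_MARGIN_FLOOR = 0.80


def max_unit_cost(price: float, margin_floor: float) -> float:
    """Highest unit cost consistent with a gross margin of at least margin_floor."""
    return price * (1.0 - margin_floor)


cost_ceiling_low = max_unit_cost(PRICE_LOW_USD, GROSS_MARGIN_FLOOR)
cost_ceiling_high = max_unit_cost(PRICE_HIGH_USD, GROSS_MARGIN_FLOOR)

print(f"Implied unit cost ceiling at $500: ${cost_ceiling_low:.0f}")
print(f"Implied unit cost ceiling at $560: ${cost_ceiling_high:.0f}")
```

On these assumptions the implied cost ceiling is roughly $100 to $112 per unit.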

“HBM is not a chip, it is a packaging problem. The people who solved packaging first bought themselves a decade. The people who stumbled on yield for nine months lost a generation — and have spent the next eighteen months earning it back.”

— Former VP, SK Hynix DRAM R&D, interviewed March 2026

The Rubin slip resets the 2026 mix

At CES 2026 in early January, SK Hynix unveiled the industry's first 16-layer 48GB HBM4 part, alongside the 12-layer 36GB version that demonstrated the industry's fastest 11.7 Gbps speed. Samsung and Micron both confirmed HBM4 sampling. Three months on, the binding constraint is not samples — it is Rubin's schedule. TrendForce on April 8 cut its model of Rubin's share of NVIDIA shipments for 2026 from 29% to 22%, citing HBM4 validation, ConnectX-9, and cooling/power-delivery complexity. Blackwell remains above 70% of the 2026 mix. Volume Rubin availability still slots into the second half of 2026, but the slope is materially flatter than the January roadmap implied.
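The size of the revision is easier to judge in relative terms. This is a minimal sketch using only the TrendForce figures quoted above. It treats the "above 70%" Blackwell figure as a floor, which is our assumption for the residual calculation.

```python
RUBIN_SHARE_PRIOR = 0.29      # earlier TrendForce model
RUBIN_SHARE_REVISED = 0.22    # April 8 update
BLACKWELL_SHARE_FLOOR = 0.70  # "above 70%", treated as a lower bound

# Absolute cut in percentage points (expressed as a fraction).
point_cut = RUBIN_SHARE_PRIOR - RUBIN_SHARE_REVISED

# The same cut as a fraction of the prior Rubin estimate.
relative_cut = point_cut / RUBIN_SHARE_PRIOR

# Upper bound on everything that is neither Rubin nor Blackwell.
residual_ceiling = 1.0 - RUBIN_SHARE_REVISED - BLACKWELL_SHARE_FLOOR

print(f"Cut in Rubin share:       {point_cut * 100:.0f} points")
print(f"Relative reduction:       {relative_cut:.1%}")
print(f"Ceiling on other products: {residual_ceiling:.0%}")
```

The seven-point cut is roughly a 24% reduction in Rubin's expected share of the mix. With Blackwell above 70%, it leaves less than 8% for everything else.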

An aside Samsung's CFO buried in the Q1 2026 commentary is more revealing than the headline numbers: conventional DRAM is currently more profitable than HBM at the margin. HBM contracts are negotiated annually; conventional DRAM is repriced each quarter into a tightening shortage. The implication is that the HBM thesis is structurally intact but cyclically diluted — and that Samsung's incentive to chase Rubin allocations is partly defensive, designed to avoid a repeat of 2024's exclusion rather than to maximise near-term unit economics.

Rubin share of NVIDIA AI shipments, 2026: 29% → 22% (TrendForce April 8 update · Blackwell >70% in 2026)

China is closer than the headlines suggest, and further than the bulls hope

CXMT's HBM3 mass production has slipped past its first-half 2026 target; industry sources now describe full 2026 mass production as 'unlikely'. The firm's 60,000-wafer-per-month plan for HBM3 — roughly 20% of total capacity — is real but back-loaded. CXMT's HBM2 mass production, conversely, came in ahead of schedule. A separate YMTC-CXMT exploratory partnership — YMTC bringing hybrid bonding expertise, CXMT bringing DRAM — is the structural answer to a Big Three that does not currently sell into China at the leading edge. None of this dislodges Korea in 2026; all of it shapes the 2028–2030 horizon.

What Samsung needs to prove through Q3

Samsung's climb from 15% HBM share in Q2 2025 to 22% in Q3 2025 was the clearest evidence that the HBM3e qualification overhang had begun to lift. Holding HBM4 allocations through the Rubin ramp is the next test. Our base case is that Samsung exits 2026 with HBM revenue share in the high-20s, achieved at the cost of aggressive pricing concessions on early HBM4 lots. The bull case, meaningful 16-high HBM4 wins inside the Rubin BOM, would require a sustained yield improvement Samsung has not publicly demonstrated. We rate that scenario at roughly 28% probability in our latest scenario model, unchanged from March.

The 2028 horizon

Our base case has SK Hynix and Samsung retaining combined HBM revenue share above 75% through 2028. The bear case — a compounding 12-month HBM4 yield stumble at one or both Korean producers, coinciding with a Micron packaging breakthrough — sits at roughly 18% probability in our latest scenario model. The Rubin slip raises the bar but does not change the verdict. The AI memory era is now, unambiguously, a three-company race in which Korea still writes most of the rules.